Comparing the original and rendered HTML can be useful when determining whether all elements are only in the rendered HTML, or if JavaScript is used on selective elements.

The two-phase approach of crawling the raw and rendered HTML can help pick up on easy-to-miss problematic scenarios, such as the original HTML having a noindex meta tag, but the rendered HTML not having one. Previously, by just crawling the rendered HTML, the page would be deemed indexable, when in reality Google will see the noindex in the original HTML first and subsequently skip rendering, meaning the removal of the noindex won’t be seen and the page won’t be indexed.
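The noindex scenario above can be illustrated with a minimal sketch. It assumes you already have both the original and the rendered HTML of a page as strings (for example, from stored HTML). The names `MetaRobotsParser`, `robots_directives` and `noindex_removed_by_javascript` are hypothetical and only for illustration. This is not how the SEO Spider itself is implemented.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so default to "".
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").strip().lower() == "robots":
            for part in attr_map.get("content", "").split(","):
                directive = part.strip().lower()
                if directive:
                    self.directives.add(directive)


def robots_directives(html):
    """Return the set of meta robots directives found in an HTML string."""
    parser = MetaRobotsParser()
    parser.feed(html)
    parser.close()
    return parser.directives


def noindex_removed_by_javascript(original_html, rendered_html):
    """True when noindex is in the original HTML but not in the rendered HTML."""
    return (
        "noindex" in robots_directives(original_html)
        and "noindex" not in robots_directives(rendered_html)
    )


# Hypothetical example page: JavaScript swaps noindex for index after rendering.
original = '<html><head><meta name="robots" content="noindex, follow"></head><body></body></html>'
rendered = '<html><head><meta name="robots" content="index, follow"></head><body><p>Hi</p></body></html>'

print(noindex_removed_by_javascript(original, rendered))
```

A page flagged by a check like this looks indexable if only the rendered HTML is inspected, which is exactly the case the two-phase crawl is meant to surface.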

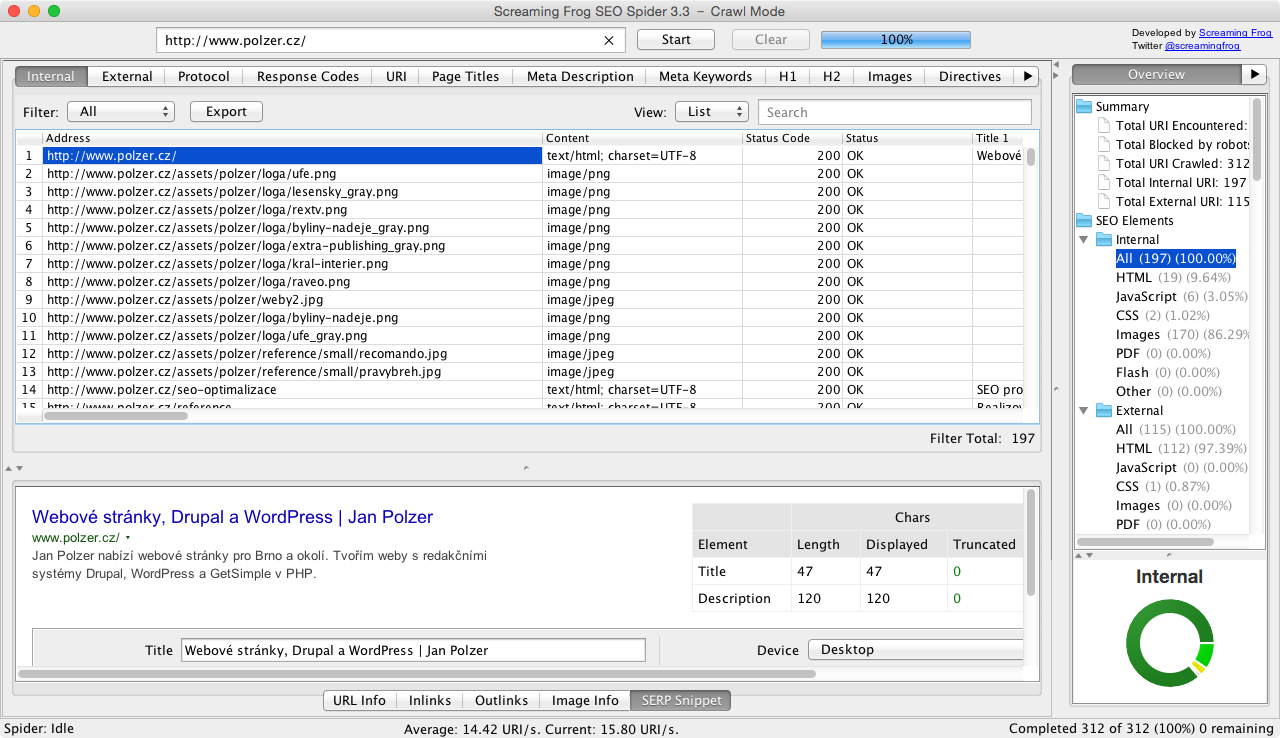

The old ‘AJAX’ tab has been updated to ‘JavaScript’, and it now contains a comprehensive list of filters around common issues related to auditing websites using client-side JavaScript. This will only populate in JavaScript rendering mode, which can be enabled via ‘Config > Spider > Rendering’.

One of the fundamental changes in this update is that the SEO Spider will now crawl both the original and rendered HTML to identify pages that have content or links only available client-side and report other key differences. This is more in line with how Google crawls and can help identify JavaScript dependencies, as well as other issues that can occur with this two-phase approach.

You’re able to clearly see which pages have JavaScript content only available in the rendered HTML post JavaScript execution. For example, our homepage apparently has 4 additional words in the rendered HTML, which was new to us.

By storing the HTML and using the lower window ‘View Source’ tab, you can also switch the filter to ‘Visible Text’ and tick ‘Show Differences’, to highlight which text is being populated by JavaScript in the rendered HTML.

Aha! There are the 4 words.

Pages that have JavaScript links are reported and the counts are shown in columns within the tab. There’s a new ‘link origin’ column and filter in the lower window ‘Outlinks’ (and ‘Inlinks’) tab to help you find exactly which links are only in the rendered HTML of a page due to JavaScript. For example, products loaded on a category page using JavaScript will only be in the ‘rendered HTML’. You can bulk export all links that rely on JavaScript via ‘Bulk Export > JavaScript > Contains JavaScript Links’.

The updated tab will tell you if page titles, descriptions, headings, meta robots or canonicals depend upon or have been updated by JavaScript. Both the original and rendered HTML versions can be viewed simultaneously.
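To make the ‘link origin’ idea concrete, here is a rough sketch of how links might be classified by where they appear. It assumes the original and rendered HTML are available as strings. The function names and the labels `"original"`, `"rendered"` and `"both"` are assumptions made for this example, not the values the SEO Spider reports.

```python
from html.parser import HTMLParser


class LinkParser(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                self.links.add(value.strip())


def extract_links(html):
    """Return the set of anchor hrefs found in an HTML string."""
    parser = LinkParser()
    parser.feed(html)
    parser.close()
    return parser.links


def link_origins(original_html, rendered_html):
    """Map each link to 'original', 'rendered' or 'both' (illustrative labels)."""
    original_links = extract_links(original_html)
    rendered_links = extract_links(rendered_html)
    origins = {}
    for link in original_links | rendered_links:
        if link in original_links and link in rendered_links:
            origins[link] = "both"
        elif link in rendered_links:
            origins[link] = "rendered"
        else:
            origins[link] = "original"
    return origins


# Hypothetical category page: the product link is injected by JavaScript.
original_page = '<nav><a href="/category/">Category</a></nav>'
rendered_page = (
    '<nav><a href="/category/">Category</a></nav>'
    '<ul><li><a href="/product-1/">Product 1</a></li></ul>'
)

for link, origin in sorted(link_origins(original_page, rendered_page).items()):
    print(link, origin)
```

Links labelled `"rendered"` here correspond to the case described above, where products loaded on a category page using JavaScript only exist in the rendered HTML.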

We’re excited to announce Screaming Frog SEO Spider version 16.0, codenamed internally as ‘marshmallow’.

Since the launch of crawl comparison in version 15, we’ve been busy working on the next round of prioritised features and enhancements.

Five years ago we launched JavaScript rendering, as the first crawler in the industry to render web pages, using Chromium (before headless Chrome existed) to crawl content and links populated client-side using JavaScript.

As Google, technology and our understanding as an industry have evolved, we’ve updated our integration with headless Chrome to improve efficiency, mimic the crawl behaviour of Google more closely, and alert users to more common JavaScript-related issues.
